Simple OpenGL Program to Draw a Circle

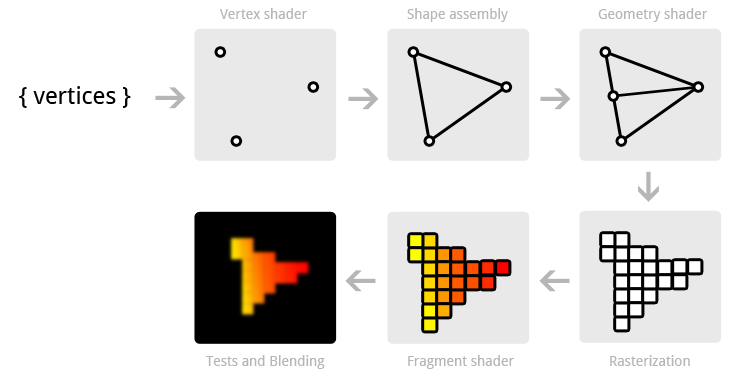

The graphics pipeline

By learning OpenGL, you've decided that you want to do all of the hard work yourself. That inevitably means that you'll be thrown in at the deep end, but once you understand the essentials, you'll see that doing things the hard way doesn't have to be so hard after all. To top it all off, the exercises at the end of this chapter will show you the sheer amount of control you have over the rendering process by doing things the modern way!

The graphics pipeline covers all of the steps that follow one another when processing the input data to get to the final output image. I'll explain these steps with the help of the accompanying illustration.

It all begins with the vertices; these are the points from which shapes like triangles will later be constructed. Each of these points is stored with certain attributes and it's up to you to decide what kind of attributes you want to store. Commonly used attributes are 3D position in the world and texture coordinates.

The vertex shader is a small program running on your graphics card that processes every one of these input vertices individually. This is where the perspective transformation takes place, which projects vertices with a 3D world position onto your 2D screen! It also passes important attributes like color and texture coordinates further down the pipeline.

After the input vertices have been transformed, the graphics card will form triangles, lines or points out of them. These shapes are called primitives because they form the basis of more complex shapes. There are some additional drawing modes to choose from, like triangle strips and line strips. These reduce the number of vertices you need to pass if you want to create objects where each next primitive is connected to the last one, like a continuous line consisting of several segments.
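
To make the saving from strips concrete, the following sketch counts how many primitives a given number of vertices produces in each mode. It is a minimal illustration and not part of OpenGL: the DrawMode enum and the primitiveCount function are hypothetical names chosen for this example, standing in for constants such as GL_TRIANGLES and GL_TRIANGLE_STRIP.

```cpp
#include <cstddef>

// Hypothetical enum for illustration; real code would use the GL_* constants.
enum class DrawMode { Points, Lines, LineStrip, Triangles, TriangleStrip };

// Number of complete primitives formed from `vertexCount` vertices.
std::size_t primitiveCount(DrawMode mode, std::size_t vertexCount)
{
    switch (mode) {
    case DrawMode::Points:        return vertexCount;
    case DrawMode::Lines:         return vertexCount / 2;
    // Every vertex after the first starts a new segment.
    case DrawMode::LineStrip:     return vertexCount >= 2 ? vertexCount - 1 : 0;
    case DrawMode::Triangles:     return vertexCount / 3;
    // Every vertex after the first two forms a triangle with the previous pair.
    case DrawMode::TriangleStrip: return vertexCount >= 3 ? vertexCount - 2 : 0;
    }
    return 0;
}
```

With 6 vertices, a triangle list yields 2 triangles while a triangle strip yields 4, because each vertex after the first two reuses the previous pair.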

The following step, the geometry shader, is completely optional and is a relatively late addition to the pipeline (it became part of core OpenGL in version 3.2). Unlike the vertex shader, the geometry shader can output more data than comes in. It takes the primitives from the shape assembly stage as input and can either pass a primitive through down to the rest of the pipeline, modify it first, completely discard it or even replace it with other primitive(s). Since the communication between the GPU and the rest of the PC is relatively slow, this stage can help you reduce the amount of data that needs to be transferred. With a voxel game for example, you could pass vertices as point vertices, along with an attribute for their world position, color and material, and the actual cubes can be produced in the geometry shader with a point as input!

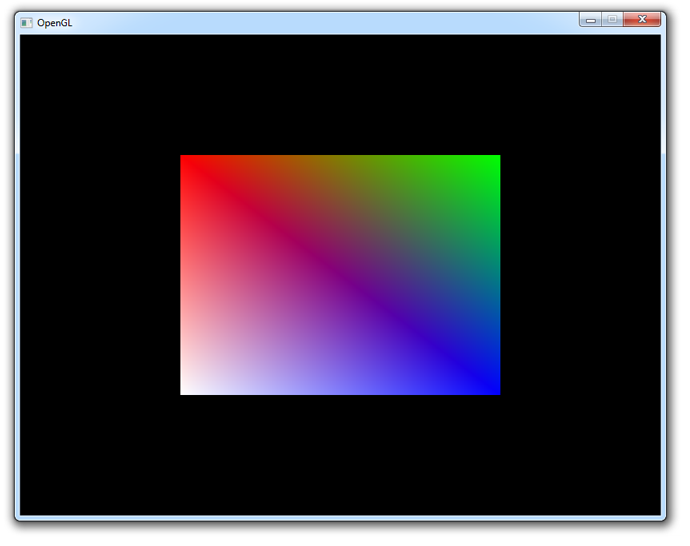

After the final list of shapes is composed and converted to screen coordinates, the rasterizer turns the visible parts of the shapes into pixel-sized fragments. The vertex attributes coming from the vertex shader or geometry shader are interpolated and passed as input to the fragment shader for each fragment. As you can see in the image, the colors are smoothly interpolated over the fragments that make up the triangle, even though only 3 points were specified.
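
The interpolation can be pictured as a weighted average of the three vertex values, where the weights describe how close the fragment is to each corner. The sketch below assumes simple barycentric weights that sum to 1 and ignores perspective correction, which real hardware also applies. Color and interpolate are names made up for this example.

```cpp
#include <array>
#include <cstddef>

using Color = std::array<float, 3>; // red, green, blue

// Blend three vertex colors using barycentric weights (w0 + w1 + w2 == 1).
Color interpolate(const Color& c0, const Color& c1, const Color& c2,
                  float w0, float w1, float w2)
{
    Color out{};
    for (std::size_t i = 0; i < 3; ++i)
        out[i] = w0 * c0[i] + w1 * c1[i] + w2 * c2[i];
    return out;
}
```

A fragment sitting exactly on a vertex (weights 1, 0, 0) receives that vertex's color unchanged, while a fragment halfway along an edge receives an even mix of the two end points.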

The fragment shader processes each individual fragment along with its interpolated attributes and should output the final color. This is usually done by sampling from a texture using the interpolated texture coordinate vertex attributes or simply outputting a color. In more advanced scenarios, there could also be calculations related to lighting and shadowing and special effects in this program. The shader also has the ability to discard certain fragments, which means that a shape will be see-through there.

Finally, the end result is composed from all these shape fragments by blending them together and performing depth and stencil testing. All you need to know about these last two right now is that they allow you to use additional rules to throw away certain fragments and let others pass. For example, if one triangle is obscured by another triangle, the fragment of the closer triangle should end up on the screen.
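
As a rough sketch of the idea behind depth testing, the code below keeps a fragment only when it is closer to the viewer than whatever was written to the same pixel before. The Fragment and Framebuffer types are hypothetical and heavily simplified. In OpenGL the test is performed for you by the graphics card once it is enabled, and the comparison rule is configurable.

```cpp
#include <cstddef>
#include <limits>
#include <vector>

struct Fragment {
    std::size_t pixel; // index of the pixel the fragment covers
    float depth;       // smaller means closer to the viewer (an assumption here)
    int color;         // stand-in for a real color value
};

struct Framebuffer {
    std::vector<float> depth;
    std::vector<int> color;

    // Start with every pixel "infinitely far away" so the first fragment wins.
    explicit Framebuffer(std::size_t pixels)
        : depth(pixels, std::numeric_limits<float>::infinity()),
          color(pixels, 0) {}

    // Keep the fragment only if it is closer than what is already stored.
    bool write(const Fragment& f)
    {
        if (f.depth >= depth[f.pixel]) return false; // obscured: throw it away
        depth[f.pixel] = f.depth;
        color[f.pixel] = f.color;
        return true;
    }
};
```

Whatever order the triangles are drawn in, the pixel ends up with the color of the closest fragment.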

Now that you know how your graphics card turns an array of vertices into an image on the screen, let's get to work!

Vertex input

The first thing you have to decide on is what data the graphics card is going to need to draw your scene correctly. As mentioned above, this data comes in the form of vertex attributes. You're free to come up with any kind of attribute you want, but it all inevitably begins with the world position. Whether you're doing 2D graphics or 3D graphics, this is the attribute that will determine where the objects and shapes end up on your screen in the end.

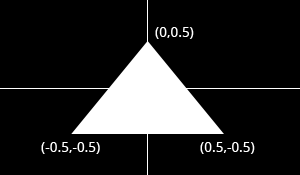

Device coordinates

When your vertices have been processed by the pipeline outlined above, their coordinates will have been transformed into device coordinates. Device X and Y coordinates are mapped to the screen between -1 and 1.

> Just like a graph, the center has coordinates (0,0) and the y axis is positive above the center. This seems unnatural because graphics applications usually have (0,0) in the top-left corner and (width,height) in the bottom-right corner, but it's an excellent way to simplify 3D calculations and to stay resolution independent.
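
For comparison, a conversion from the familiar pixel coordinates to device coordinates could look like the sketch below. Vec2 and pixelToDevice are hypothetical helpers, not OpenGL functions, and the sketch assumes a top-left pixel origin with Y pointing down.

```cpp
struct Vec2 { float x, y; };

// Map a pixel position (origin top-left, Y down) to device coordinates
// (origin at the center, Y up, both axes spanning -1 to 1).
Vec2 pixelToDevice(float px, float py, float width, float height)
{
    return { 2.0f * px / width - 1.0f,    // 0..width  -> -1..1
             1.0f - 2.0f * py / height }; // 0..height ->  1..-1 (flipped)
}
```

In an 800 by 600 window, the pixel at (400, 300) maps to the device coordinate (0, 0) and the top-left pixel corner maps to (-1, 1).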

The triangle above consists of 3 vertices positioned at (0,0.5), (0.5,-0.5) and (-0.5,-0.5) in clockwise order. It is clear that the only variation between the vertices here is the position, so that's the only attribute we need. Since we're passing the device coordinates directly, an X and Y coordinate suffices for the position.

OpenGL expects you to send all of your vertices in a single array, which may be confusing at first. To understand the format of this array, let's see what it would look like for our triangle.

    float vertices[] = {
         0.0f,  0.5f, // Vertex 1 (X, Y)
         0.5f, -0.5f, // Vertex 2 (X, Y)
        -0.5f, -0.5f  // Vertex 3 (X, Y)
    };

As you can see, this array should simply be a list of all vertices with their attributes packed together. The order in which the attributes appear doesn't matter, as long as it's the same for each vertex. The order of the vertices doesn't have to be sequential (i.e. the order in which shapes are formed), but this requires us to provide extra data in the form of an element buffer. This will be discussed at the end of this chapter as it would just complicate things for now.

The next step is to upload this vertex data to the graphics card. This is important because the memory on your graphics card is much faster and you won't have to send the data again every time your scene needs to be rendered (about 60 times per second).

This is done by creating a Vertex Buffer Object (VBO):

    GLuint vbo;
    glGenBuffers(1, &vbo); // Generate 1 buffer

The memory is managed by OpenGL, so instead of a pointer you get a positive number as a reference to it. GLuint is simply a cross-platform substitute for unsigned int, just like GLint is one for int. You will need this number to make the VBO active and to destroy it when you're done with it.

To upload the actual data to it you first have to make it the active object by calling glBindBuffer:

    glBindBuffer(GL_ARRAY_BUFFER, vbo);

As hinted by the GL_ARRAY_BUFFER enum value there are other types of buffers, but they are not important right now. This statement makes the VBO we just created the active array buffer. Now that it's active we can copy the vertex data to it.

    glBufferData(GL_ARRAY_BUFFER, sizeof(vertices), vertices, GL_STATIC_DRAW);

Notice that this function doesn't refer to the id of our VBO, but instead to the active array buffer. The second parameter specifies the size in bytes. The final parameter is very important and its value depends on the usage of the vertex data. I'll outline the ones related to drawing here:

- GL_STATIC_DRAW: The vertex data will be uploaded once and drawn many times (e.g. the world).

- GL_DYNAMIC_DRAW: The vertex data will be created once, changed from time to time, but drawn many times more than that.

- GL_STREAM_DRAW: The vertex data will be uploaded once and drawn once.

This usage value will determine in what kind of memory the data is stored on your graphics card for the highest efficiency. For example, VBOs with GL_STREAM_DRAW as type may store their data in memory that allows faster writing in favour of slightly slower drawing.

The vertices with their attributes have been copied to the graphics card now, but they're not quite ready to be used yet. Remember that we can make up any kind of attribute we want and in any order, so now comes the moment where you have to explain to the graphics card how to handle these attributes. This is where you'll see how flexible modern OpenGL really is.

Shaders

As discussed earlier, there are three shader stages your vertex data will pass through. Each shader stage has a strictly defined purpose and in older versions of OpenGL, you could only slightly tweak what happened and how it happened. With modern OpenGL, it's up to us to instruct the graphics card what to do with the data. This is why it's possible to decide per application what attributes each vertex should have. You'll have to implement both the vertex and fragment shader to get something on the screen; the geometry shader is optional and is discussed later.

Shaders are written in a C-style language called GLSL (OpenGL Shading Language). OpenGL will compile your program from source at runtime and copy it to the graphics card. Each version of OpenGL has its own version of the shader language with availability of a certain feature set and we will be using GLSL 1.50. This version number may seem a bit off when we're using OpenGL 3.2, but that's because shaders were only introduced in OpenGL 2.0 as GLSL 1.10. Starting from OpenGL 3.3, this problem was solved and the GLSL version is the same as the OpenGL version.

Vertex shader

The vertex shader is a program on the graphics card that processes each vertex and its attributes as they appear in the vertex array. Its duty is to output the final vertex position in device coordinates and to output any data the fragment shader requires. That's why the 3D transformation should take place here. The fragment shader depends on attributes like the color and texture coordinates, which will usually be passed from input to output without any calculations.

Remember that our vertex position is already specified as device coordinates and no other attributes exist, so the vertex shader will be fairly bare bones.

    #version 150 core

    in vec2 position;

    void main()
    {
        gl_Position = vec4(position, 0.0, 1.0);
    }

The #version preprocessor directive is used to indicate that the code that follows is GLSL 1.50 code using OpenGL's core profile. Next, we specify that there is only one attribute, the position. Apart from the regular C types, GLSL has built-in vector and matrix types identified by vec* and mat* identifiers. The type of the values within these constructs is always a float. The number after vec specifies the number of components (x, y, z, w) and the number after mat specifies the number of rows/columns. Since the position attribute consists of only an X and Y coordinate, vec2 is perfect.

You can be quite creative when working with these vector types. In the example above a shortcut was used to set the first two components of the vec4 to those of the vec2. These two lines are equal:

    gl_Position = vec4(position, 0.0, 1.0);
    gl_Position = vec4(position.x, position.y, 0.0, 1.0);

When you're working with colors, you can also access the individual components with r, g, b and a instead of x, y, z and w. This makes no difference and can help with clarity.

The final position of the vertex is assigned to the special gl_Position variable, because the position is needed for primitive assembly and many other built-in processes. For these to function correctly, the last value w needs to have a value of 1.0f. Other than that, you're free to do anything you want with the attributes and we'll see how to output those when we add color to the triangle later in this chapter.

Fragment shader

The output from the vertex shader is interpolated over all the pixels on the screen covered by a primitive. These pixels are called fragments and this is what the fragment shader operates on. Just like the vertex shader it has one mandatory output, the final color of a fragment. It's up to you to write the code for computing this color from vertex colors, texture coordinates and any other data coming from the vertex shader.

Our triangle only consists of white pixels, so the fragment shader simply outputs that color every time:

    #version 150 core

    out vec4 outColor;

    void main()
    {
        outColor = vec4(1.0, 1.0, 1.0, 1.0);
    }

You'll immediately notice that we're not using some built-in variable for outputting the color, say gl_FragColor. This is because a fragment shader can in fact output multiple colors and we'll see how to handle this when actually loading these shaders. The outColor variable uses the type vec4, because each color consists of a red, green, blue and alpha component. Colors in OpenGL are generally represented as floating point numbers between 0.0 and 1.0 instead of the common 0 and 255.
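
If your colors come from an image editor or a web palette in the 0 to 255 range, they have to be rescaled before being handed to a shader. The following is a minimal sketch, assuming 8-bit channels; toFloatColor is a hypothetical helper name and not an OpenGL function.

```cpp
#include <array>
#include <cstdint>

// Convert an 8-bit RGBA color (0 to 255 per channel) to the 0.0 to 1.0 range.
std::array<float, 4> toFloatColor(std::uint8_t r, std::uint8_t g,
                                  std::uint8_t b, std::uint8_t a)
{
    return {{ r / 255.0f, g / 255.0f, b / 255.0f, a / 255.0f }};
}
```

Opaque white, (255, 255, 255, 255), becomes (1.0, 1.0, 1.0, 1.0), which is the value hard-coded in the fragment shader above.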

Compiling shaders

Compiling shaders is easy once you have loaded the source code (either from file or as a hard-coded string). You can easily include your shader source in the C++ code through C++11 raw string literals:

    const char* vertexSource = R"glsl(
        #version 150 core

        in vec2 position;

        void main()
        {
            gl_Position = vec4(position, 0.0, 1.0);
        }
    )glsl";

Just like vertex buffers, creating a shader itself starts with creating a shader object and loading data into it.

    GLuint vertexShader = glCreateShader(GL_VERTEX_SHADER);
    glShaderSource(vertexShader, 1, &vertexSource, NULL);

Unlike VBOs, you can simply pass a reference to shader functions instead of making it active or anything like that. The glShaderSource function can take multiple source strings in an array, but you'll usually have your source code in one char array. The last parameter can contain an array of source code string lengths; passing NULL simply makes it stop at the null terminator.

All that's left is compiling the shader into code that can be executed by the graphics card now:

    glCompileShader(vertexShader);

Be aware that if the shader fails to compile, e.g. because of a syntax error, glGetError will not report an error! See the block below for info on how to debug shaders.

Checking if a shader compiled successfully

    GLint status;
    glGetShaderiv(vertexShader, GL_COMPILE_STATUS, &status);

If status is equal to GL_TRUE, then your shader was compiled successfully.

Retrieving the compile log

    char buffer[512];
    glGetShaderInfoLog(vertexShader, 512, NULL, buffer);

This will store the first 511 bytes + null terminator of the compile log in the specified buffer. The log may also report useful warnings even when compiling was successful, so it's useful to check it out from time to time when you develop your shaders.

The fragment shader is compiled in exactly the same way:

    GLuint fragmentShader = glCreateShader(GL_FRAGMENT_SHADER);
    glShaderSource(fragmentShader, 1, &fragmentSource, NULL);
    glCompileShader(fragmentShader);

Again, be sure to check if your shader was compiled successfully, because it will save you from a headache later on.

Combining shaders into a program

Up until now the vertex and fragment shaders have been two separate objects. While they've been programmed to work together, they aren't actually connected yet. This connection is made by creating a program out of these two shaders.

    GLuint shaderProgram = glCreateProgram();
    glAttachShader(shaderProgram, vertexShader);
    glAttachShader(shaderProgram, fragmentShader);

Since a fragment shader is allowed to write to multiple color buffers, you need to explicitly specify which output is written to which buffer. This needs to happen before linking the program. However, since this is 0 by default and there's only one output right now, the following line of code is not necessary:

    glBindFragDataLocation(shaderProgram, 0, "outColor");

Use glDrawBuffers when rendering to multiple buffers, because only the first output will be enabled by default.

After attaching both the fragment and vertex shaders, the connection is made by linking the program. It is allowed to make changes to the shaders after they've been added to a program (or multiple programs!), but the actual result will not change until a program has been linked again. It is also possible to attach multiple shaders for the same stage (e.g. fragment) if they're parts forming the whole shader together. A shader object can be deleted with glDeleteShader, but it will not actually be removed before it has been detached from all programs with glDetachShader.

    glLinkProgram(shaderProgram);

To actually start using the shaders in the program, you just have to call:

    glUseProgram(shaderProgram);

Just like a vertex buffer, only one program can be active at a time.

Making the link between vertex data and attributes

Although we have our vertex data and shaders now, OpenGL still doesn't know how the attributes are formatted and ordered. You first need to retrieve a reference to the position input in the vertex shader:

    GLint posAttrib = glGetAttribLocation(shaderProgram, "position");

The location is a number assigned when the program is linked, usually following the order of the input definitions. The first and only input position in this example will normally have location 0, but querying it avoids relying on that.

With the reference to the input, you can specify how the data for that input is retrieved from the array:

    glVertexAttribPointer(posAttrib, 2, GL_FLOAT, GL_FALSE, 0, 0);

The first parameter references the input. The second parameter specifies the number of values for that input, which is the same as the number of components of the vec. The third parameter specifies the type of each component and the fourth parameter specifies whether the input values should be normalized between -1.0 and 1.0 (or 0.0 and 1.0 depending on the format) if they aren't floating point numbers.

The last two parameters are arguably the most important here as they specify how the attribute is laid out in the vertex array. The first number specifies the stride, or how many bytes are between each position attribute in the array. The value 0 means that there is no data in between. This is currently the case as the position of each vertex is immediately followed by the position of the next vertex. The last parameter specifies the offset, or how many bytes from the start of the array the attribute occurs. Since there are no other attributes, this is 0 as well.
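
The stride and offset rules boil down to a small piece of arithmetic. The sketch below mirrors the description above in plain C++. attributeByteOffset is a hypothetical helper written for this explanation and is not an OpenGL call.

```cpp
#include <cstddef>

// Byte position of an attribute for vertex `index`, following the stride and
// offset rules described for glVertexAttribPointer. A stride of 0 means
// "tightly packed": the attribute's own size is the step between vertices.
std::size_t attributeByteOffset(std::size_t index, std::size_t components,
                                std::size_t componentSize,
                                std::size_t stride, std::size_t offset)
{
    const std::size_t step = (stride == 0) ? components * componentSize : stride;
    return offset + index * step;
}
```

For the triangle, the position of the third vertex (index 2) starts at 2 * 2 * sizeof(float) bytes into the array, since each vertex is just two tightly packed floats.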

It is important to know that this function will store not only the stride and the offset, but also the VBO that is currently bound to GL_ARRAY_BUFFER. That means that you don't have to explicitly bind the correct VBO when the actual drawing functions are called. This also implies that you can use a different VBO for each attribute.

Don't worry if you don't fully understand this yet, as we'll see how to alter this to add more attributes soon enough.

Last, but not least, the vertex attribute array needs to be enabled:

    glEnableVertexAttribArray(posAttrib);

Vertex Array Objects

You can imagine that real graphics programs use many different shaders and vertex layouts to take care of a wide variety of needs and special effects. Changing the active shader program is easy enough with a call to glUseProgram, but it would be quite inconvenient if you had to set up all of the attributes again every time.

Luckily, OpenGL solves that problem with Vertex Array Objects (VAO). VAOs store all of the links between the attributes and your VBOs with raw vertex data.

A VAO is created in the same way as a VBO:

    GLuint vao;
    glGenVertexArrays(1, &vao);

To start using it, simply bind it:

    glBindVertexArray(vao);

As soon as you've bound a certain VAO, every time you call glVertexAttribPointer, that information will be stored in that VAO. This makes switching between different vertex data and vertex formats as easy as binding a different VAO! Just remember that a VAO doesn't store any vertex data by itself, it just references the VBOs you've created and how to retrieve the attribute values from them.

Since only calls after binding a VAO stick to it, make sure that you've created and bound the VAO at the start of your program. Any vertex buffers and element buffers bound before it will be ignored.

Drawing

Now that you've loaded the vertex data, created the shader programs and linked the data to the attributes, you're ready to draw the triangle. The VAO that was used to store the attribute information is already bound, so you don't have to worry about that. All that's left is to simply call glDrawArrays in your main loop:

    glDrawArrays(GL_TRIANGLES, 0, 3);

The first parameter specifies the kind of primitive (commonly point, line or triangle), the second parameter specifies how many vertices to skip at the beginning and the last parameter specifies the number of vertices (not primitives!) to process.

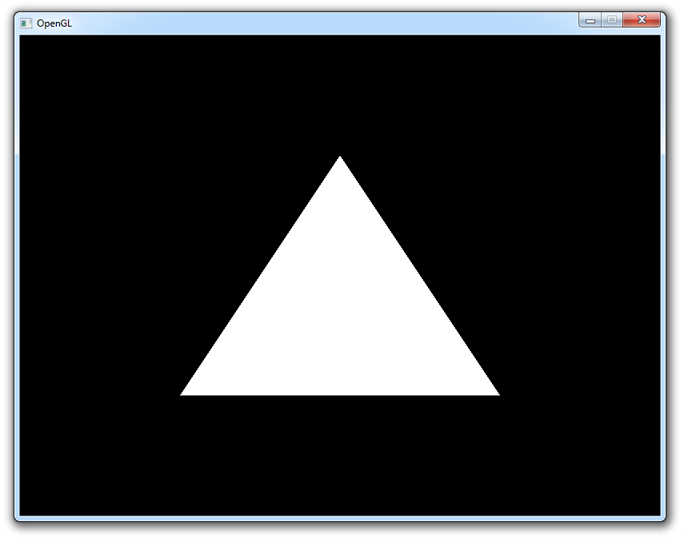

When you run your program now, you should see the following:

If you don't see anything, make sure that the shaders have compiled correctly, that the program has linked correctly, that the attribute array has been enabled, that the VAO has been bound before specifying the attributes, that your vertex data is correct and that glGetError returns 0. If you can't find the problem, try comparing your code to this sample.

Uniforms

Right now the white color of the triangle has been hard-coded into the shader code, but what if you wanted to change it after compiling the shader? As it turns out, vertex attributes are not the only way to pass data to shader programs. There is another way to pass data to the shaders called uniforms. These are essentially global variables, having the same value for all vertices and/or fragments. To demonstrate how to use these, let's make it possible to change the color of the triangle from the program itself.

By making the color in the fragment shader a uniform, it will end up looking like this:

    #version 150 core

    uniform vec3 triangleColor;

    out vec4 outColor;

    void main()
    {
        outColor = vec4(triangleColor, 1.0);
    }

The last component of the output color is transparency, which is not very interesting right now. If you run your program now you'll see that the triangle is black, because the value of triangleColor hasn't been set yet.

Changing the value of a uniform is just like setting vertex attributes, you first have to grab the location:

    GLint uniColor = glGetUniformLocation(shaderProgram, "triangleColor");

The values of uniforms are changed with any of the glUniformXY functions, where X is the number of components and Y is the type. Common types are f (float), d (double) and i (integer).

    glUniform3f(uniColor, 1.0f, 0.0f, 0.0f);

If you run your program now, you'll see that the triangle is red. To make things a little more exciting, try varying the color with the time by doing something like this in your main loop:

    // Before the main loop: remember when the program started
    auto t_start = std::chrono::high_resolution_clock::now();

    ...

    // Inside the main loop: seconds elapsed since the start, as a float
    auto t_now = std::chrono::high_resolution_clock::now();
    float time = std::chrono::duration_cast<std::chrono::duration<float>>(t_now - t_start).count();

    glUniform3f(uniColor, (sin(time * 4.0f) + 1.0f) / 2.0f, 0.0f, 0.0f);

Although this example may not be very exciting, it does demonstrate that uniforms are essential for controlling the behaviour of shaders at runtime. Vertex attributes on the other hand are ideal for describing a single vertex.
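
The expression passed to glUniform3f can be pulled into a small function to see why it always produces a valid color component. This is only a sketch of the arithmetic from the snippet above; redIntensity is a name invented here.

```cpp
#include <cmath>

// Red intensity used in the animation: a sine wave remapped from [-1, 1]
// to [0, 1], so the result always lies in the valid color range.
float redIntensity(float timeSeconds)
{
    return (std::sin(timeSeconds * 4.0f) + 1.0f) / 2.0f;
}
```

At time 0 the sine term is 0, so the triangle starts at half intensity and then oscillates between black and full red.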

See the code if you have any trouble getting this to work.

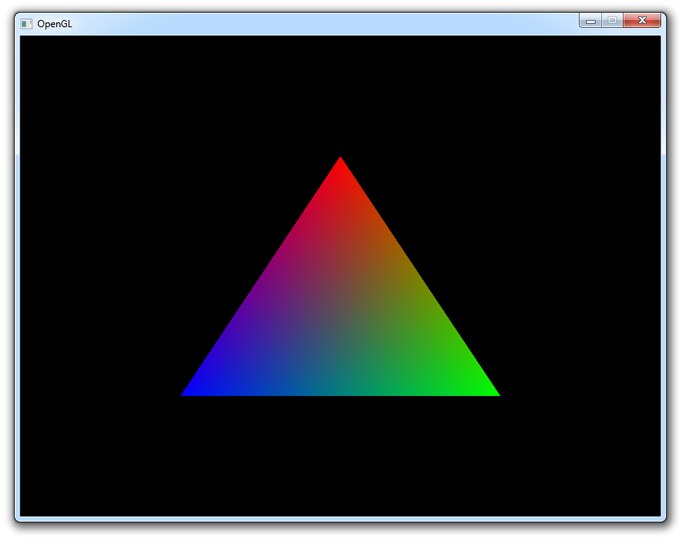

Adding some more colors

Although uniforms have their place, color is something we'd rather like to specify per corner of the triangle! Let's add a color attribute to the vertices to accomplish this.

We'll first have to add the extra attributes to the vertex data. Transparency isn't really relevant, so we'll only add the red, green and blue components:

    float vertices[] = {
         0.0f,  0.5f, 1.0f, 0.0f, 0.0f, // Vertex 1: Red
         0.5f, -0.5f, 0.0f, 1.0f, 0.0f, // Vertex 2: Green
        -0.5f, -0.5f, 0.0f, 0.0f, 1.0f  // Vertex 3: Blue
    };

Then we have to change the vertex shader to take it as input and pass it to the fragment shader:

    #version 150 core

    in vec2 position;
    in vec3 color;

    out vec3 Color;

    void main()
    {
        Color = color;
        gl_Position = vec4(position, 0.0, 1.0);
    }

And Color is added as input to the fragment shader:

    #version 150 core

    in vec3 Color;

    out vec4 outColor;

    void main()
    {
        outColor = vec4(Color, 1.0);
    }

Make sure that the output of the vertex shader and the input of the fragment shader have the same name, or the shaders will not be linked properly.

Now, we just need to alter the attribute pointer code a bit to accommodate the new X, Y, R, G, B attribute order.

    GLint posAttrib = glGetAttribLocation(shaderProgram, "position");
    glEnableVertexAttribArray(posAttrib);
    glVertexAttribPointer(posAttrib, 2, GL_FLOAT, GL_FALSE,
                          5*sizeof(float), 0);

    GLint colAttrib = glGetAttribLocation(shaderProgram, "color");
    glEnableVertexAttribArray(colAttrib);
    glVertexAttribPointer(colAttrib, 3, GL_FLOAT, GL_FALSE,
                          5*sizeof(float), (void*)(2*sizeof(float)));

The fifth parameter is set to 5*sizeof(float) now, because each vertex consists of 5 floating point attribute values. The offset of 2*sizeof(float) for the color attribute is there because each vertex starts with 2 floating point values for the position that it has to skip over.
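
An alternative way to arrive at the same stride and offset values is to describe one vertex as a struct and let the compiler compute them. This is a sketch under the assumption that the struct has no padding, which the static_assert lines check. Vertex, kStride, kPositionOffset and kColorOffset are hypothetical names, and the code above works fine without them.

```cpp
#include <cstddef>

// Hypothetical struct mirroring the X, Y, R, G, B layout of the vertex array.
struct Vertex {
    float position[2];
    float color[3];
};

// Values that could be passed as the stride and offset arguments.
constexpr std::size_t kStride         = sizeof(Vertex);
constexpr std::size_t kPositionOffset = offsetof(Vertex, position);
constexpr std::size_t kColorOffset    = offsetof(Vertex, color);

// Fail at compile time if the compiler inserted padding between members.
static_assert(kStride == 5 * sizeof(float), "unexpected padding in Vertex");
static_assert(kColorOffset == 2 * sizeof(float), "color should follow position");
```

The hand-written expressions could then be replaced by sizeof(Vertex) for the stride and (void*)offsetof(Vertex, color) for the color offset, which keeps the pointer setup in sync if attributes are added later.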

And we're done!

You should now have a reasonable understanding of vertex attributes and shaders. If you ran into problems, ask in the comments or have a look at the altered source code.

Element buffers

Right now, the vertices are specified in the order in which they are drawn. If you wanted to add another triangle, you would have to add 3 additional vertices to the vertex array. There is a way to control the order, which also enables you to reuse existing vertices. This can save you a lot of memory when working with real 3D models later on, because each point is usually occupied by a corner of three triangles!

An element array is filled with unsigned integers referring to vertices bound to GL_ARRAY_BUFFER. If we just want to draw them in the order they are in now, it'll look like this:

    GLuint elements[] = {
        0, 1, 2
    };

They are loaded into video memory through a VBO just like the vertex data:

    GLuint ebo;
    glGenBuffers(1, &ebo);

    ...

    glBindBuffer(GL_ELEMENT_ARRAY_BUFFER, ebo);
    glBufferData(GL_ELEMENT_ARRAY_BUFFER,
                 sizeof(elements), elements, GL_STATIC_DRAW);

The only thing that differs is the target, which is GL_ELEMENT_ARRAY_BUFFER this time.

To actually make use of this buffer, you'll have to change the draw command:

    glDrawElements(GL_TRIANGLES, 3, GL_UNSIGNED_INT, 0);

The first parameter is the same as with glDrawArrays, but the other ones all refer to the element buffer. The second parameter specifies the number of indices to draw, the third parameter specifies the type of the element data and the last parameter specifies the offset. The only real difference is that you're talking about indices instead of vertices now.

To see how an element buffer can be beneficial, let's try drawing a rectangle using two triangles. We'll start by doing it without an element buffer.

    float vertices[] = {
        -0.5f,  0.5f, 1.0f, 0.0f, 0.0f, // Top-left
         0.5f,  0.5f, 0.0f, 1.0f, 0.0f, // Top-right
         0.5f, -0.5f, 0.0f, 0.0f, 1.0f, // Bottom-right

         0.5f, -0.5f, 0.0f, 0.0f, 1.0f, // Bottom-right
        -0.5f, -0.5f, 1.0f, 1.0f, 1.0f, // Bottom-left
        -0.5f,  0.5f, 1.0f, 0.0f, 0.0f  // Top-left
    };

By calling glDrawArrays instead of glDrawElements like before, the element buffer will simply be ignored:

    glDrawArrays(GL_TRIANGLES, 0, 6);

The rectangle is rendered as it should, but the repetition of vertex data is a waste of memory. Using an element buffer allows you to reuse data:

    float vertices[] = {
        -0.5f,  0.5f, 1.0f, 0.0f, 0.0f, // Top-left
         0.5f,  0.5f, 0.0f, 1.0f, 0.0f, // Top-right
         0.5f, -0.5f, 0.0f, 0.0f, 1.0f, // Bottom-right
        -0.5f, -0.5f, 1.0f, 1.0f, 1.0f  // Bottom-left
    };

    ...

    GLuint elements[] = {
        0, 1, 2,
        2, 3, 0
    };

    ...

    glDrawElements(GL_TRIANGLES, 6, GL_UNSIGNED_INT, 0);

The element buffer still specifies 6 vertices to form 2 triangles like before, but now we're able to reuse vertices! This may not seem like much of a big deal at this point, but when your graphics application loads many models into the relatively small graphics memory, element buffers will be an important area of optimization.
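
The step from the duplicated vertex list to the indexed one can also be automated. The sketch below is one possible way to do it, assuming that two vertices count as identical only when all five floats match exactly. IndexedMesh and buildIndexed are hypothetical names, and the linear search is chosen for brevity rather than speed.

```cpp
#include <array>
#include <cstddef>
#include <vector>

using Vertex = std::array<float, 5>; // X, Y, R, G, B

struct IndexedMesh {
    std::vector<Vertex> vertices;       // unique vertices only
    std::vector<unsigned int> elements; // one index per original vertex
};

// Collapse repeated vertices and record an index for each original entry.
// A linear search keeps the sketch short; it only suits very small meshes.
IndexedMesh buildIndexed(const std::vector<Vertex>& input)
{
    IndexedMesh mesh;
    for (const Vertex& v : input) {
        // Look for an identical vertex that was stored earlier.
        std::size_t i = 0;
        while (i < mesh.vertices.size() && mesh.vertices[i] != v) ++i;
        // Not found: store it as a new unique vertex.
        if (i == mesh.vertices.size()) mesh.vertices.push_back(v);
        mesh.elements.push_back(static_cast<unsigned int>(i));
    }
    return mesh;
}
```

Feeding it the six rectangle vertices from the version without an element buffer yields four unique vertices and the element list 0, 1, 2, 2, 3, 0.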

If you run into trouble, have a look at the full source code.

This chapter has covered all of the core principles of drawing things with OpenGL and it's absolutely essential that you have a good understanding of them before continuing. Therefore I advise you to do the exercises below before diving into textures.

Exercises

- Alter the vertex shader so that the triangle is upside down. (Solution)

- Invert the colors of the triangle by altering the fragment shader. (Solution)

- Change the program so that each vertex has only one color value, determining the shade of gray. (Solution)

Source: https://open.gl/drawing
